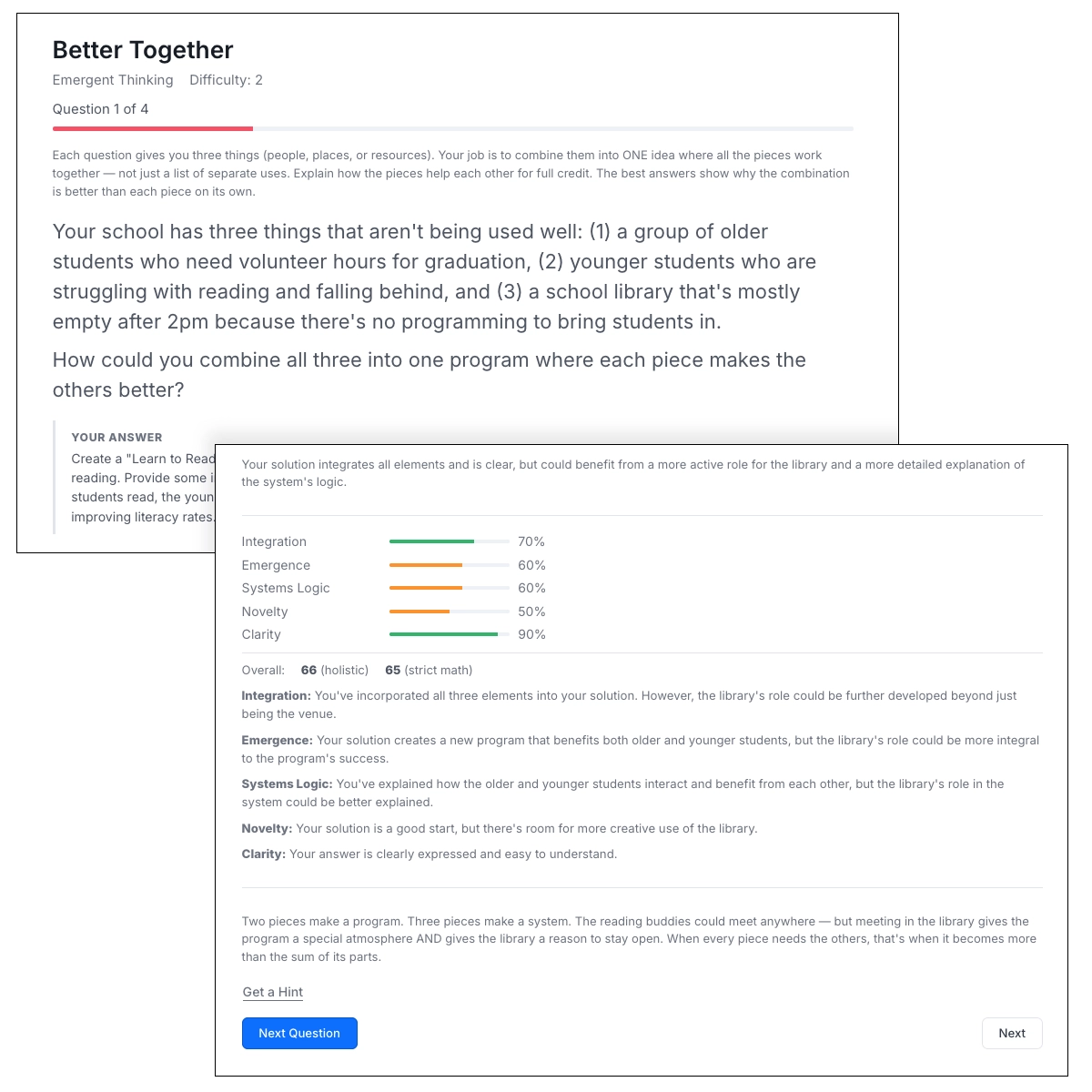

Multi-axis AI scoring

Every free-text answer is evaluated by AI across multiple dimensions. You get partial credit, axis-by-axis feedback, and XP proportional to how well you reason — not just whether you guessed right.

Core Scoring Axes

Every puzzle is scored on these three dimensions. Together they measure not just whether you got the right answer, but how well you thought about it.

Answer correctness

Did you identify the right solution? The AI compares your answer against the creator's model answer. Getting close still earns partial credit — this isn't pass/fail.

Reasoning quality

Did you explain why your answer is correct? The AI looks for logical chains, step-by-step thinking, and sound argumentation — not just the final conclusion.

Specificity

Did you anchor your reasoning in concrete details? Citing specific elements from the puzzle, naming the exact principle, or giving a precise example all score higher than vague generalities.
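To make the partial-credit idea concrete, here is a minimal sketch of how three axis scores could turn into XP. The page does not publish the actual formula, so everything here is an assumption for illustration: the `CoreScores` and `award_xp` names, the 0-to-1 score range, the equal weighting, and the `max_xp` value are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoreScores:
    """Hypothetical per-axis scores, each assumed to lie in [0.0, 1.0]."""
    correctness: float
    reasoning: float
    specificity: float

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 0-to-1 range.
        for name, value in vars(self).items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be between 0 and 1, got {value}")


def award_xp(scores: CoreScores, max_xp: int = 100) -> int:
    """Return XP proportional to the mean of the three axis scores.

    Equal weighting is an assumption; the real system may weight axes differently.
    """
    mean = (scores.correctness + scores.reasoning + scores.specificity) / 3
    return round(max_xp * mean)


# A right answer with no explanation earns only part of the available XP.
print(award_xp(CoreScores(correctness=1.0, reasoning=0.0, specificity=0.0)))
```

Under these assumptions, a correct guess with no reasoning earns roughly a third of the XP, while a near-miss that is well argued and specific can earn more.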

Puzzle-based Scoring Axes

More complex puzzles, especially those built on structured frameworks, add one or two scoring axes that measure the specific type of thinking the puzzle is designed to train.

Structural framing

Used in systems thinking puzzles. Did you diagnose at the level of feedback loops, incentives, and constraints — or did you blame individuals and describe surface events? Event-level thinking earns zero here, even if you named the right rule.

Adjacency

Used in lateral thinking puzzles. How far did your answer move beyond the obvious frame while staying plausible? The best answers are genuinely surprising yet defensible — not random, not the first thing anyone would think of.

Emergence

Used in emergent thinking puzzles. Does your combination of ideas produce something genuinely new — a capability or behaviour that none of the individual parts could achieve alone? The whole should be greater than the sum of its parts.
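The relationship between puzzle types and their extra axes can be sketched as a simple lookup. This is an illustrative assumption rather than the platform's actual data model: the identifiers (`CORE_AXES`, `EXTRA_AXES`, `axes_for`) and the puzzle-type keys are hypothetical, and each type is shown with a single extra axis even though some puzzles may carry two.

```python
# Core axes applied to every puzzle (names are hypothetical identifiers).
CORE_AXES = ("correctness", "reasoning", "specificity")

# Assumed mapping from puzzle type to its additional axis, following the
# descriptions above; the real system may attach one or two axes per puzzle.
EXTRA_AXES = {
    "systems": ("structural_framing",),
    "lateral": ("adjacency",),
    "emergent": ("emergence",),
}


def axes_for(puzzle_type: str) -> tuple[str, ...]:
    """Return the core axes plus any extra axes for the given puzzle type."""
    return CORE_AXES + EXTRA_AXES.get(puzzle_type, ())


print(axes_for("lateral"))
print(axes_for("basic"))  # unknown types fall back to the core axes only
```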

See scoring in action

Try a puzzle and watch AI evaluate your reasoning in real time.